NVIDIA has made it clear that it will not be adopting High-Bandwidth Flash (HBF) memory technology, even as 4TB stack options begin to emerge. Instead, the graphics hardware giant plans to stick with its current High Bandwidth Memory (HBM) solutions. The decision, first reported by Wccftech, comes as the race for advanced memory solutions heats up, driven largely by the surge in artificial intelligence applications.

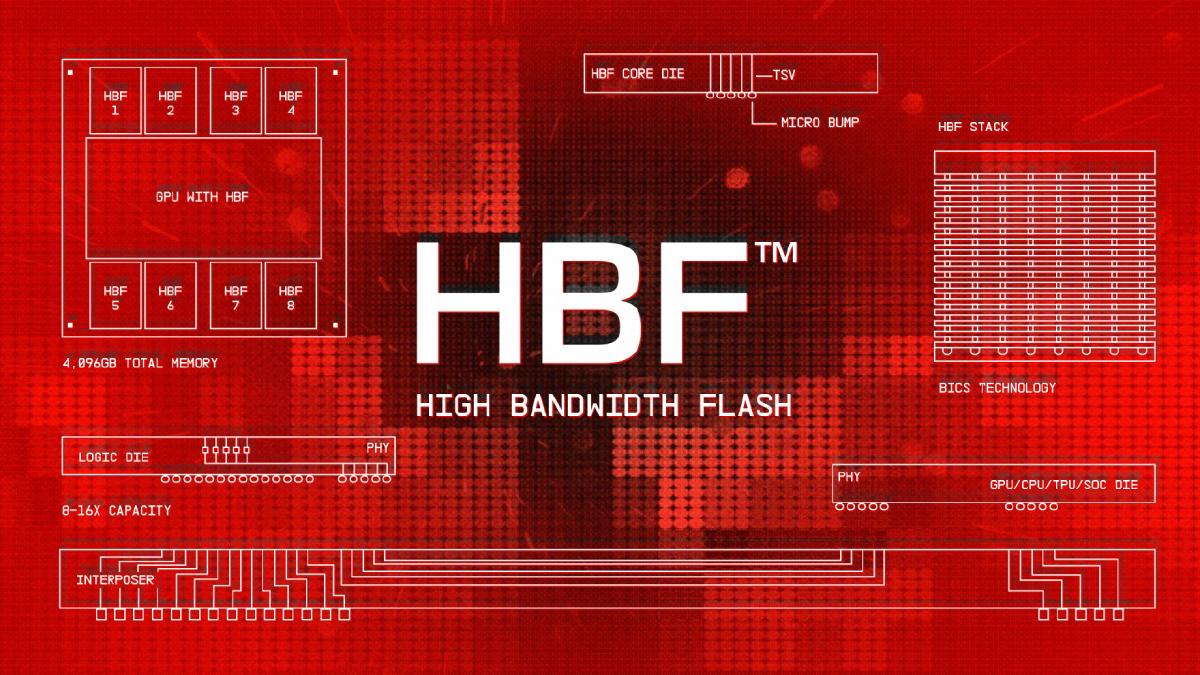

High-Bandwidth Flash is seen as a significant advancement in memory technology, designed to offer greater capacity than traditional HBM while delivering far higher bandwidth than conventional NAND flash. Co-developed by SanDisk and other partners, HBF aims to bridge the gap between HBM and NAND flash, potentially reshaping how memory is used in high-performance computing. With the growing demand for memory in AI workloads, HBF could serve as a critical component in future systems.

However, NVIDIA’s ongoing reliance on HBM indicates a cautious approach, likely influenced by the established performance metrics and reliability of its current memory solutions. HBM has long been a staple in high-end graphics cards and computing systems, particularly for applications requiring substantial bandwidth such as gaming, AI, and scientific simulations.

While HBF technology is projected to begin sampling later this year, Google has emerged as a notable early adopter. The tech giant is expected to be one of the first companies to integrate this memory into its hardware, which could signal a broader shift in the industry. This early adoption may allow Google to leverage the benefits of HBF in its data centers and AI infrastructure, giving it a competitive edge in the fast-evolving tech landscape.

As the industry ramps up its focus on artificial intelligence, the need for advanced memory solutions becomes increasingly critical. The ability to process vast amounts of data with lower latency and higher efficiency could define the next generation of computing. For NVIDIA, sticking with HBM allows the company to maintain its current performance standards, but it also risks being left behind if competitors successfully implement HBF in their systems.

The tension between established technologies like HBM and emerging solutions such as HBF reflects the broader challenges facing hardware manufacturers today. As companies pivot to accommodate the demands of AI and machine learning, the choices they make regarding memory technology will have lasting implications for performance and efficiency.

In summary, while NVIDIA opts to remain loyal to HBM, the rise of High-Bandwidth Flash, particularly in the hands of Google, highlights the ongoing evolution in memory technology. As the landscape continues to shift, it will be essential to monitor how these developments influence both consumer hardware and enterprise applications.

NVIDIA, a leading player in the graphics and computing market, is known for its innovative GPUs that power everything from gaming to AI workloads. Meanwhile, Google is a tech titan focusing on cloud computing and AI, pushing the boundaries of what is possible with advanced memory technologies.

Image credit: Wccftech

This article was generated with AI assistance and reviewed for accuracy.